BCE needs the model to be confident about what it is predicting. In a highly imbalanced dataset, this makes the model learn the negative class more easily, because negative examples are heavily available. Focal Loss addresses this by making it easier for the model to predict something without being 80-100% sure of what the object is. In other words, Focal Loss allows the model to take risks while making predictions, which is important when dealing with highly imbalanced datasets.

For a single class, Focal Loss as defined in the paper is $$FL(p_t) = -\alpha_t (1 - p_t)^{\gamma} \log(p_t)$$. This definition covers only one class: it omits the $$\sum$$ that would sum over all the classes $$C$$. To calculate the total Focal Loss per sample, sum over all the classes.

The only difference between the original Cross-Entropy Loss and Focal Loss is the pair of hyperparameters alpha ($$\alpha$$) and gamma ($$\gamma$$). An important point to note is that when $$\gamma = 0$$, Focal Loss becomes Cross-Entropy Loss (scaled by $$\alpha$$).

Let's use the graph below, which shows how the hyperparameters $$\alpha$$ and $$\gamma$$ influence Focal Loss, to understand them. In the graph, the blue line represents Cross-Entropy Loss. The X-axis, "probability of ground truth class" (let's call it $$p_t$$), is the probability that the model predicts for the ground truth object. As an example, let's say the model predicts that something is a bike with probability 0.6. If the object really is a bike, $$p_t$$ is 0.6. If the object is not a bike, $$p_t$$ is 0.4 (1 - 0.6). The Y-axis denotes the loss value at a given $$p_t$$.

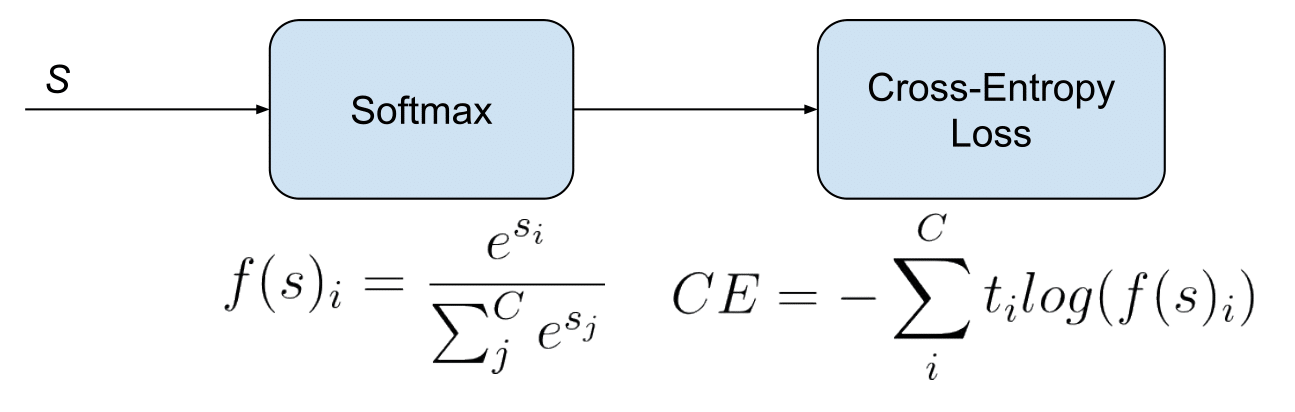

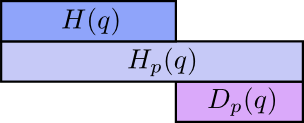
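To make the roles of $$p_t$$, $$\alpha$$ and $$\gamma$$ concrete, here is a minimal, framework-free sketch of the per-class Focal Loss for a binary label. It is not the PyTorch implementation mentioned in this post. The function names, the `alpha=None` convention for "no weighting", and the defaults `alpha=0.25`, `gamma=2.0` (values commonly quoted from the paper) are assumptions made for illustration.

```python
import math

def p_t(p, t):
    """Probability the model assigns to the ground-truth class.

    p is the predicted probability of the positive class (e.g. "bike"),
    t is the ground-truth label: 1 for positive, 0 for negative.
    """
    return p if t == 1 else 1.0 - p

def cross_entropy(p, t):
    """Plain cross-entropy for one class: -log(p_t)."""
    return -math.log(p_t(p, t))

def focal_loss(p, t, alpha=0.25, gamma=2.0):
    """Per-class focal loss: -alpha_t * (1 - p_t)^gamma * log(p_t).

    alpha=None disables the class weighting (alpha_t = 1); this is a
    convention of this sketch, not something taken from the post.
    """
    pt = p_t(p, t)
    if alpha is None:
        alpha_t = 1.0
    else:
        alpha_t = alpha if t == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - pt) ** gamma * math.log(pt)

# The bike example: the model predicts "bike" with probability 0.6.
pt_if_bike = p_t(0.6, 1)      # ground truth is a bike
pt_if_not_bike = p_t(0.6, 0)  # ground truth is not a bike

# With gamma = 0 and no alpha weighting, focal loss equals cross-entropy.
fl_gamma0 = focal_loss(0.6, 1, alpha=None, gamma=0.0)
ce = cross_entropy(0.6, 1)

# An easy, well-classified example (p_t = 0.9) is down-weighted by (1 - 0.9)^2.
ce_easy = cross_entropy(0.9, 1)
fl_easy = focal_loss(0.9, 1, alpha=None, gamma=2.0)
```

With `gamma=2.0`, the loss of the easy example is scaled by a factor of about 0.01 relative to cross-entropy, so the many easy examples contribute far less to the total loss than the few hard ones.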

Multi-class classification is treated as a single classification problem of samples in one of $$C$$ classes.

In multi-label classification, each data point can belong to more than one of the $$C$$ classes. The deep learning model will have $$C$$ output neurons. Unlike in multi-class classification, the classes here are not mutually exclusive. The target vector $$t$$ can have more than one positive class, so it is a vector of 0s and 1s with dimensionality $$C$$, where 0 marks a negative class and 1 a positive class. One intuitive way to understand multi-label classification is to treat it as $$C$$ independent binary classification problems, where each output neuron decides whether a sample belongs to a class or not.

Cross-entropy loss is used when adjusting model weights during training. The aim is to minimize the loss: the smaller the loss, the better the model.

Output activation functions are transformations applied to the vectors coming out of deep learning models before the loss computation. The outputs after the transformation represent probabilities of belonging to one class or to several, depending on whether the setting is multi-class or multi-label.

The sigmoid function squashes a vector into the range $$(0, 1)$$. It is applied independently to each element $$s_i$$ of the vector $$s$$:

$$f(s_i) = \frac{1}{1 + e^{-s_i}}$$

In the multi-label setting, the loss sums the Binary Cross-Entropy (BCE) over all the classes:

$$Loss = \sum_{i=1}^{C} BCE(t_i, f(s_i)) = -\sum_{i=1}^{C} \left[ t_i \log(f(s_i)) + (1 - t_i) \log(1 - f(s_i)) \right]$$

Focal Loss was introduced in the paper Focal Loss for Dense Object Detection by Lin et al. (at FAIR). Object detection is one of the most widely studied topics in computer vision, and a major challenge is detecting small objects inside images. Object detection algorithms evaluate about $$10^4$$ to $$10^5$$ candidate locations per image, but only a few locations contain objects and the rest are just background. Training with Binary Cross-Entropy Loss in such a highly class-imbalanced setting doesn't perform well.
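To make the sigmoid and Binary Cross-Entropy formulas above concrete, here is a minimal sketch in plain Python for a multi-label sample with $$C = 3$$ classes. The scores and targets are made-up values, and the function names are illustrative assumptions.

```python
import math

def sigmoid(s):
    """Squash one raw score into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-s))

def bce(t, p):
    """Binary cross-entropy for one class with target t (0 or 1) and probability p."""
    return -(t * math.log(p) + (1 - t) * math.log(1 - p))

scores = [1.2, -0.8, 3.0]  # hypothetical raw outputs s, one per class
target = [1, 0, 1]         # multi-label target t: classes 0 and 2 are positive

# Sigmoid is applied to each element independently, so the
# probabilities do not have to sum to 1.
probs = [sigmoid(s) for s in scores]

# Each output neuron is its own binary problem; the sample loss is the sum.
per_class = [bce(t, p) for t, p in zip(target, probs)]
total_loss = sum(per_class)
```

Because every class is scored separately, a confident wrong prediction on any single class raises the total loss on its own, regardless of how the other classes are predicted.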
In this blog post we will understand cross-entropy loss and its various names. Later in the post, we will learn about Focal Loss, a successor of Cross-Entropy (CE) loss that performs better than CE on highly imbalanced datasets. We will also implement Focal Loss in PyTorch.

Cross-Entropy loss goes by different names because of the variations used in different settings, but its core concept remains the same across all of them. Cross-Entropy Loss is used in a supervised setting, so before diving deep into CE, let's first revise some widely known and important concepts about classification.

In multi-class classification, each data point can belong to ONE of $$C$$ classes. The target (ground truth) vector $$t$$ will be a one-hot vector with one positive class and $$C-1$$ negative classes. All $$C$$ classes are mutually exclusive, so no two classes can be positive at once. The deep learning model will have $$C$$ output neurons giving the probability of each of the $$C$$ classes being the positive class, gathered in a vector $$s$$ (scores).
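As a rough illustration of the multi-class setting, the sketch below computes cross-entropy for a one-hot target over $$C = 3$$ classes. It assumes a softmax activation to turn the scores into probabilities, which is the usual choice for mutually exclusive classes but is an assumption here; the scores are made-up values.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1 (assumed activation)."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(target, probs):
    """CE = -sum_i t_i * log(p_i); with a one-hot target only the positive class contributes."""
    return -sum(t * math.log(p) for t, p in zip(target, probs) if t > 0)

scores = [2.0, 1.0, 0.1]  # hypothetical output scores s for C = 3 classes
target = [1, 0, 0]        # one-hot ground truth t: class 0 is the positive class

probs = softmax(scores)
loss = cross_entropy(target, probs)
```

Since the target is one-hot, the loss reduces to the negative log of the probability assigned to the single positive class.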
